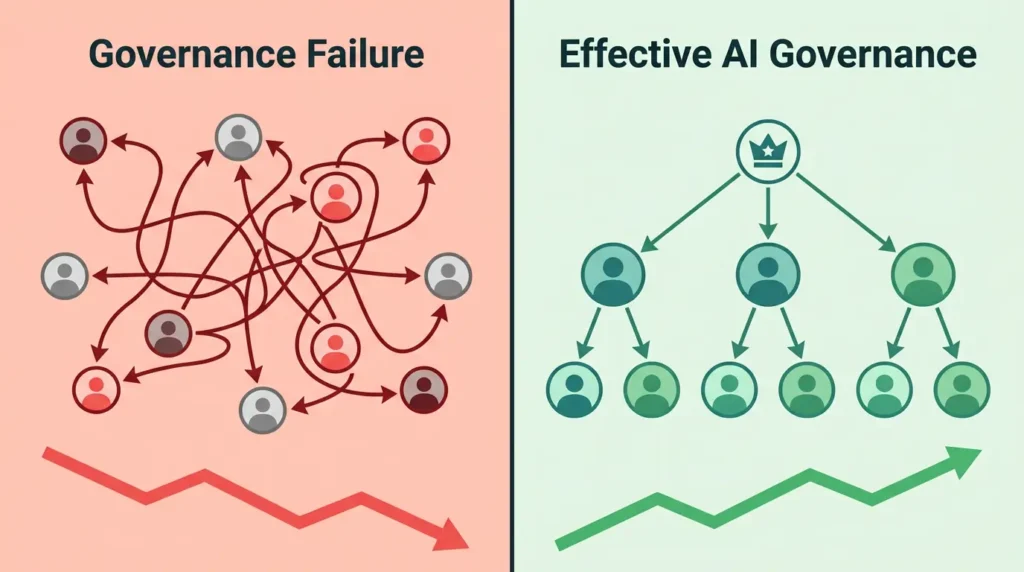

AI transformation fails more often due to governance gaps than technology limitations. When organizations deploy AI without clear accountability structures, defined ownership, risk management policies, or ethical oversight, even technically advanced systems create confusion rather than value. Governance in AI transformation means establishing who decides, who monitors, who is responsible, and what guardrails exist before deployment, not after. Organizations that treat governance as a strategic priority consistently achieve better AI outcomes, higher ROI, and more sustainable scaling.

Introduction

Approximately 70% of enterprise AI projects fail, and that failure rate is not driven by weak models or insufficient computing power. The technology works. The organization around it does not.

AI transformation is a problem of governance, and that recognition changes how leaders should approach their digital transformation strategy. Companies that invest heavily in AI tools without building accountability structures, oversight mechanisms, and ethical guardrails consistently face the same AI transformation challenges: pilots that never scale, ungoverned systems that produce unreliable outputs, and transformation programs that stall despite genuine technical capability.

In fact, 78% of organizations now use AI in their operations, yet only 14% have enterprise-level AI governance frameworks in place. That gap between adoption speed and governance readiness is the defining organizational challenge of 2026.

This article defines what governance means in the AI context, explains the root causes of the governance gap, shows what poor enterprise AI governance looks like in practice using a composite case example, and introduces the RADAR Governance Framework, a five-component model any organization can apply to start building responsible AI governance.

What Does “AI Transformation Is a Problem of Governance” Mean?

When people say AI transformation is a problem of governance, they mean that the primary barrier to successful AI adoption is not technical capability. It is the absence of clear organizational structures: defined roles, ownership, accountability mechanisms, and ethical decision-making processes that allow AI to function safely and strategically at scale inside real organizations.

In the AI transformation context, governance refers to the policies, processes, rules, and human structures that determine how AI is developed, deployed, monitored, and corrected within an organization. It answers the questions that technology alone cannot answer. Who owns this AI system? Who is accountable when it produces a wrong decision? What data can it access? What guardrails prevent misuse? Who reviews its outputs over time?

Integrating data governance with AI deployment sits at the center of this challenge. Most organizations manage their data infrastructure separately from their AI deployment programs, creating blind spots where AI systems operate on data that has no clear ownership, access controls, or quality standards. This separation is a governance problem, not a technical one.

Without clear answers to these foundational questions, AI runs. But no one governs it. Power without control creates risk rather than value, and that is exactly the gap that organizational accountability structures are designed to close.

Key Definition: AI governance is the structured set of accountability mechanisms, policies, roles, and oversight processes that ensure AI systems operate in alignment with organizational goals, regulatory requirements, and ethical standards across their full lifecycle from approval through retirement.

Why Do Most AI Projects Fail? The Governance Gap Explained

Approximately 70% of enterprise AI projects fail not because of technology limitations, but because of governance gaps, including unclear accountability, inadequate oversight mechanisms, and misaligned organizational processes. Understanding why AI projects fail in organizations requires looking past the technology layer and examining the organizational structures that either support or undermine AI at scale.

The AI adoption failure rate is consistent and well-documented. Only 20 to 25% of AI initiatives ever reach full production deployment. Fewer than 5% deliver measurable return on investment despite successful proof-of-concept results. A 2025 benchmark report found that 58% of leaders identify disconnected governance systems as the primary obstacle preventing them from scaling AI responsibly.

Separate findings indicate that 42% of companies abandoned most AI initiatives in 2025, up sharply from 17% in 2024, and the average company scrapped 46% of proof-of-concept projects before reaching production. These failures share a common thread. Organizations treat AI transformation as a technology project and assign ownership to IT or data science teams. Those teams solve technical problems effectively. They do not set organizational policy, define ethical guardrails, or align AI strategy with business objectives.

Tools like predictive analytics can surface powerful data-driven insights and forecast organizational risks with precision. But even the most sophisticated predictive models require governance structures to determine how those insights are used, who acts on them, which teams are accountable for outcomes, and how results are reviewed and audited over time.

Common Symptoms of AI Governance Failure

Organizations experiencing AI scaling problems typically show several recognizable patterns of governance failure:

- AI projects succeed in proof-of-concept phases but never receive organizational approval to scale across business units

- Multiple teams claim partial ownership of the same AI system, meaning no single person carries full accountability for its performance or compliance

- AI outputs influence consequential decisions in pricing, hiring, lending, or customer experience but no formal review process or audit trail exists

- Ethical concerns about algorithmic accountability and AI bias are raised informally but never addressed through a structured AI ethics policy

- Compliance and legal teams are unaware of which AI systems are active and what data they process

- AI deployment challenges emerge when model performance degrades silently because no monitoring process was built at the time of deployment

- Employees use unauthorized AI tools at work because no official responsible AI principles or acceptable use guidelines have been communicated

Each of these symptoms points to the same root cause. The digital transformation governance infrastructure was never built alongside the technology.

Real Case Example: When AI Governance Failure Becomes Expensive

The following example illustrates what AI transformation governance problems look like in practice. It is a composite of publicly documented governance failure patterns, with details anonymized.

A mid-size financial services organization deployed an AI-powered credit risk scoring system in early 2024 without establishing a formal governance owner, risk classification process, or model monitoring schedule. The technical team successfully built and tested the model. No cross-functional oversight committee reviewed the deployment. No AI ethics policy governed what data the model could access or how its outputs would be audited.

Within nine months, the model had drifted significantly from its original training distribution, producing a 31% increase in false risk flags for applicants from specific demographic segments. Because no model monitoring process existed, no one detected the drift. Because no governance owner was defined, no one was accountable for reviewing outputs. Because no AI impact assessment had been conducted, the organization had no documentation to demonstrate due diligence when a regulatory inquiry was opened.

The result was a $1.4 million compliance investigation cost, a mandatory model suspension lasting four months, and reputational damage that affected client retention in the following quarter.

The AI system itself worked as designed. Every failure that followed was a governance failure, not a technology failure. This pattern is not exceptional. It is the norm for organizations that skip the governance infrastructure work during AI deployment.

As new work models emerge, including programs examined in our coverage of the AI-driven reduced workweek, governance questions become even more urgent. When AI automates workflows during compressed working hours, three questions move from secondary concerns to strategic priorities: who monitors outputs, who holds accountability and oversight for the AI, and how responsible AI principles are maintained with less human review time.

What Is AI Governance?

An AI governance framework is a structured set of policies, roles, processes, and accountability mechanisms that guide how an organization develops, deploys, monitors, and audits its AI systems. It is not a compliance checklist. It is a strategic capability that determines whether AI transformation delivers sustainable value or creates unsustainable organizational and regulatory risk.

Recognized bodies including the OECD, the World Economic Forum, and McKinsey Global Institute have consistently identified governance maturity as the primary differentiator between organizations that scale AI successfully and those that stall. MIT Sloan Management Review research reinforces this finding: organizations achieving measurable AI ROI are those that invested in governance infrastructure before scaling deployment.

Effective enterprise AI governance is built on four core pillars:

- Accountability: Every AI system must have a clearly named owner responsible for its performance, outputs, and compliance. Clear AI accountability and oversight prevent the diffuse ownership problem that causes most governance failures.

- Transparency: Stakeholders across the organization must be able to understand what AI systems do, what data they consume, and how decisions are made. AI decision-making accountability depends entirely on this visibility.

- Risk Management: Organizations must classify AI use cases by risk level and apply proportional controls. High-risk AI systems that affect hiring, credit, health, or safety require significantly stronger model risk governance than low-risk internal automation tools. This risk tiering approach is the foundation of responsible AI principles and is now codified in international standards.

- Regulatory Compliance: AI governance must align with the EU AI Act, the NIST AI Risk Management Framework, and ISO/IEC 42001. Organizations adopting ISO/IEC 42001 in 2025 and 2026 are building formal model inventories, conducting AI impact assessments, and establishing cross-functional governance committees with direct executive sponsorship.
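The risk tiering described under the Risk Management pillar can be sketched in a few lines of Python. This is a minimal illustration, not a compliance tool: the two-tier split, the `HIGH_RISK_DOMAINS` set, and the control lists in `CONTROLS` are assumptions made for the example, and a real policy would define its own tiers and controls.

```python
from dataclasses import dataclass
from enum import Enum


class RiskTier(Enum):
    HIGH = "high"
    LOW = "low"


# Domains the article names as high-risk; treating them as a fixed set is an assumption.
HIGH_RISK_DOMAINS = {"hiring", "credit", "health", "safety"}

# Hypothetical control lists per tier; real controls come from your own policy.
CONTROLS = {
    RiskTier.HIGH: ["named owner", "impact assessment", "human review", "scheduled audit"],
    RiskTier.LOW: ["named owner", "annual review"],
}


@dataclass(frozen=True)
class UseCase:
    name: str
    domain: str


def classify(use_case: UseCase) -> RiskTier:
    """Return HIGH when the use case touches a high-risk domain, else LOW."""
    if use_case.domain.lower() in HIGH_RISK_DOMAINS:
        return RiskTier.HIGH
    return RiskTier.LOW


def required_controls(use_case: UseCase) -> list[str]:
    """Look up the proportional controls for the use case's tier."""
    return list(CONTROLS[classify(use_case)])


scoring = UseCase("credit risk scoring", "credit")
notes = UseCase("meeting summarizer", "internal automation")
print(classify(scoring).value, required_controls(scoring))
print(classify(notes).value, required_controls(notes))
```

The point of the design is proportionality: the credit scoring use case picks up human review and a scheduled audit, while the internal summarizer only needs an owner and a periodic review.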

How Does Poor AI Governance Show Up in Real Organizations?

Poor AI governance rarely presents as one dramatic failure event. It accumulates quietly through a series of organizational gaps, each small enough to ignore individually but serious enough collectively to cause AI transformation programs to stall, collapse, or produce harmful outputs that damage stakeholder trust and regulatory standing.

The following comparison illustrates the real-world difference between governance-weak and governance-strong AI environments:

| Governance Gap | What It Looks Like in Practice | Why It Creates Serious Risk |
| --- | --- | --- |
| No defined AI owner | Multiple teams claim or avoid responsibility for the same system | Problems go unaddressed because no one has clear authority to act |
| Missing risk classification | High-risk and low-risk AI applications receive identical treatment | Compliance violations, ethical failures, and regulatory penalties accumulate |
| Absent executive oversight committee | AI decisions are made at team level without leadership review | Strategic misalignment between AI activity and organizational objectives |
| No model monitoring process | AI systems run unchecked for months or years after deployment | Model drift, bias accumulation, and silent performance degradation |
| Absent AI ethics policy | AI deployed in sensitive domains without formal ethical review | Discrimination risks, privacy violations, and reputational damage compound |
| No data governance AI integration | AI accesses data with no ownership, access controls, or quality standards | Unreliable outputs, privacy violations, and data lineage failures |
| Siloed team structure | Data science, legal, and compliance teams work on AI independently | No unified responsible AI principles, inconsistent controls organization-wide |

This table describes the operational reality inside most organizations currently experiencing AI deployment challenges. The technology was deployed. The governance was not built alongside it.

The RADAR Governance Framework: How to Build AI Governance in an Organization

Moving from theory to practice requires a clear structure. The RADAR Governance Framework is a five-component model for building sustainable enterprise AI governance at any stage of AI maturity.

RADAR stands for: Responsibility, Accountability, Data Control, Audit Readiness, and Regulatory Alignment. These five components address the full lifecycle of AI governance from initial deployment through continuous monitoring and compliance documentation.

R: Responsibility

Define a named governance owner for every AI system before deployment begins. Responsibility assignment eliminates the ambiguity that creates AI scaling problems and ensures someone is always accountable for system performance, ethical compliance, and business alignment.
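One way to make the named-owner rule enforceable is to check it against a model inventory. The sketch below assumes a simple in-memory inventory; the `AISystem` record, the system names, and the owner shown are hypothetical and stand in for whatever registry an organization actually keeps.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class AISystem:
    name: str
    owner: Optional[str]  # a named person, not a team alias
    in_production: bool


def has_named_owner(system: AISystem) -> bool:
    """True only when the owner field holds a non-blank name."""
    return bool(system.owner and system.owner.strip())


def may_deploy(system: AISystem) -> bool:
    """Deployment gate: refuse any system without a named owner."""
    return has_named_owner(system)


def unowned_production_systems(inventory: list[AISystem]) -> list[str]:
    """Return names of production systems that nobody is accountable for."""
    return [s.name for s in inventory if s.in_production and not has_named_owner(s)]


# Hypothetical inventory for illustration.
inventory = [
    AISystem("credit-risk-scorer", owner=None, in_production=True),
    AISystem("support-chat-router", owner="J. Rivera", in_production=True),
    AISystem("churn-forecast-pilot", owner="", in_production=False),
]

print(unowned_production_systems(inventory))  # ['credit-risk-scorer']
```

Running the same check in a deployment pipeline, rather than in a quarterly review, is what turns the rule from a policy statement into a gate.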

A: Accountability

Establish an executive AI oversight committee with cross-functional representation from legal, compliance, technology, operations, and business leadership. Accountability at the leadership level is the single most consistent differentiator between AI programs that scale and those that stall. Organizations with executive-sponsored AI governance deploy AI 40% faster and achieve 30% better ROI than those without.

D: Data Control

Integrate data governance practices into every AI deployment. Define what data each AI system can access, establish data quality standards, document data lineage, and enforce access controls. Data control is not a secondary governance task. It is the foundation on which AI strategy alignment and model reliability depend.
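The four data control tasks above (access, quality, lineage, ownership) can be expressed as a single authorization check that runs before an AI system reads a dataset. This is a sketch under stated assumptions: `DatasetPolicy`, `authorize`, and the dataset and system names are invented for illustration and do not correspond to any particular data catalog product.

```python
from dataclasses import dataclass, field


@dataclass
class DatasetPolicy:
    owner: str                      # accountable data owner
    lineage: str                    # where the data comes from
    quality_checked: bool = False   # passed the organization's quality standard
    allowed_systems: set[str] = field(default_factory=set)


class DataAccessDenied(Exception):
    """Raised when an AI system requests data it is not cleared to use."""


def authorize(system: str, dataset: str, policies: dict[str, DatasetPolicy]) -> None:
    """Raise DataAccessDenied unless the dataset is registered, vetted, and allowed."""
    policy = policies.get(dataset)
    if policy is None:
        raise DataAccessDenied(f"{dataset}: no registered owner or policy")
    if not policy.quality_checked:
        raise DataAccessDenied(f"{dataset}: quality standard not yet met")
    if system not in policy.allowed_systems:
        raise DataAccessDenied(f"{system} is not cleared to read {dataset}")


# Hypothetical policy registry for illustration.
policies = {
    "loan_applications": DatasetPolicy(
        owner="Head of Lending Data",
        lineage="core banking export",
        quality_checked=True,
        allowed_systems={"credit-risk-scorer"},
    ),
}

authorize("credit-risk-scorer", "loan_applications", policies)  # passes silently

try:
    authorize("credit-risk-scorer", "web_clickstream", policies)
except DataAccessDenied as err:
    print(err)
```

Denying by default when a dataset has no registered policy closes the blind spot where AI systems run on data that nobody owns.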

A: Audit Readiness

Build model monitoring and scheduled audit processes into every AI deployment from day one. Audit readiness means maintaining documentation that demonstrates how the AI system was built, what data it uses, how it performs over time, and how errors are detected and corrected. This documentation is now a legal requirement under the EU AI Act for high-risk AI applications.
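A scheduled drift check is one concrete piece of this monitoring. The sketch below uses the Population Stability Index (PSI), a common drift measure that the article does not itself prescribe, to compare a baseline sample of model scores with a recent one. The equal-width binning, the `1e-6` floor for empty bins, and the `0.25` alert threshold are illustrative assumptions (0.25 is a widely used rule of thumb, not a standard).

```python
import math


def psi(expected: list[float], actual: list[float], bins: int = 10) -> float:
    """Population Stability Index between a baseline sample and a recent sample."""
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # fall back to 1.0 if all baseline values are equal

    def proportions(values: list[float]) -> list[float]:
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            counts[min(max(idx, 0), bins - 1)] += 1  # clamp out-of-range values to edge bins
        # A small floor keeps the logarithm defined when a bin is empty.
        return [max(c / len(values), 1e-6) for c in counts]

    e, a = proportions(expected), proportions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


DRIFT_THRESHOLD = 0.25  # assumed alert level; tune to your own risk tier


def drift_alert(expected: list[float], actual: list[float]) -> bool:
    """True when the recent scores have shifted enough to need owner review."""
    return psi(expected, actual) > DRIFT_THRESHOLD


baseline = [i / 100 for i in range(100)]   # scores captured at deployment
recent = [s + 0.3 for s in baseline]       # recent scores, shifted upward

print(drift_alert(baseline, baseline))  # False
print(drift_alert(baseline, recent))    # True
```

Logging each PSI value with its date also produces the kind of over-time performance record that audit readiness calls for.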

R: Regulatory Alignment

Map every AI system in your model inventory against applicable regulatory frameworks including the EU AI Act, ISO/IEC 42001, and the NIST AI Risk Management Framework. Regulatory alignment is not a one-time compliance exercise. It is a continuous governance function that must adapt as regulations evolve across jurisdictions. Over 65 nations have now published national AI strategies, and the regulatory landscape will continue tightening through 2026 and beyond.
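Because regulatory alignment is continuous rather than one-time, the mapping can be rechecked on a schedule. The following sketch assumes each inventory entry records which frameworks apply, which have been mapped, and when the mapping was last reviewed; the `ComplianceRecord` shape and the 180-day review interval are hypothetical choices, not requirements taken from any of the frameworks named above.

```python
from dataclasses import dataclass, field
from datetime import date, timedelta
from typing import Optional


@dataclass
class ComplianceRecord:
    system: str
    applicable: set[str]                            # frameworks that apply to this system
    mapped: set[str] = field(default_factory=set)   # frameworks already mapped and documented
    last_reviewed: Optional[date] = None


def review_findings(record: ComplianceRecord, today: date, max_age_days: int = 180) -> list[str]:
    """List what is missing or stale for one system's regulatory mapping."""
    findings = []
    missing = record.applicable - record.mapped
    if missing:
        findings.append("unmapped: " + ", ".join(sorted(missing)))
    if record.last_reviewed is None or today - record.last_reviewed > timedelta(days=max_age_days):
        findings.append("review overdue")
    return findings


# Hypothetical record for illustration.
record = ComplianceRecord(
    system="credit-risk-scorer",
    applicable={"EU AI Act", "ISO/IEC 42001", "NIST AI RMF"},
    mapped={"NIST AI RMF"},
    last_reviewed=date(2025, 1, 15),
)

for finding in review_findings(record, today=date(2025, 12, 1)):
    print(record.system, "->", finding)
```

An empty findings list means the mapping is complete and current as of the check date; anything else goes to the system's governance owner.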

Why Is AI Governance a Strategic Priority, Not Just Compliance?

AI governance has shifted from a legal checkbox to a competitive differentiator. Organizations with mature governance frameworks deploy AI faster, scale more reliably, and earn more stakeholder trust, while competitors stuck in governance debt face audit risk, project abandonment, and reputational damage from ungoverned systems that produced discriminatory, inaccurate, or legally problematic outputs.

The World Economic Forum identified governance maturity as a top organizational priority for responsible AI deployment in its 2025 technology outlook. The OECD confirmed that even in public sector contexts, AI use is growing but has not achieved transformative impact primarily because implementation challenges related to algorithmic accountability and organizational oversight remain unresolved.

AI transformation failure statistics from 2025 and 2026 point consistently to the same conclusion. The organizations winning at AI transformation are not those with the most advanced AI systems. They are the organizations that built the governance infrastructure to manage, monitor, and scale those systems responsibly.

When teams spend 56% of their time on manual governance-related activities because automation is absent and governance is poorly designed, the result is a structural drain on AI talent and innovation capacity. More than half of those teams' time goes to compliance paperwork instead of value creation. Building the RADAR Governance Framework addresses this inefficiency by making governance a designed system rather than a reactive burden.

Governance Is the Foundation, Not the Obstacle

AI transformation is a problem of governance, and the organizations that recognize this reality early hold a measurable and growing advantage. The AI transformation failure statistics are consistent across industries and geographies. AI project failure rates around 70%, scaling rates below 25%, and ROI delivery rates below 5% are not produced by weak technology. They are produced by organizational accountability gaps, absent digital transformation governance structures, missing AI ethics policy frameworks, and leadership teams that delegated governance responsibility without building the infrastructure to support it.

The RADAR Governance Framework gives every organization, regardless of current AI maturity level, a clear starting structure. Build Responsibility by naming AI owners. Build Accountability through executive oversight. Build Data Control through integrated data governance practices. Build Audit Readiness through model monitoring and documentation. Build Regulatory Alignment through ISO/IEC 42001, the NIST AI Risk Management Framework, and EU AI Act compliance.

Governance does not slow AI transformation. It is what makes transformation possible, sustainable, and trustworthy. And as AI reshapes not just how organizations operate but how individuals manage their digital identities, data privacy, and online communications, every layer of accountability matters. For a simple and immediate step in protecting your personal data and online identity, try freemail.ai, a free temporary email service designed for secure, private digital communication.

FAQ: AI Transformation Governance Problems and Solutions

What does it mean that AI transformation is a problem of governance?

It means the primary reason AI transformations fail is not weak technology. It is the absence of clear organizational structures: who owns AI systems, who is accountable for outputs, what ethical guardrails exist, and what rules govern how AI is used at scale. Without those structures, even powerful AI creates confusion instead of business value. The technology is ready. The governance is not.

Why do so many AI projects fail to scale?

Most AI projects fail to scale because they are treated as technology experiments rather than organizational transformations. Without governance frameworks covering defined ownership, risk classification, and executive oversight, AI pilots succeed in isolation but cannot be deployed safely across the broader organization. Only 20 to 25% of AI initiatives ever reach full production deployment despite high initial investment.

What is an AI governance framework and what does it include?

An AI governance framework is a structured set of policies, roles, processes, and accountability mechanisms that guide how an organization develops, deploys, monitors, and audits its AI systems. Core components include accountability structures, transparency standards, risk classification, and regulatory compliance alignment. Recognized standards include ISO/IEC 42001 for AI management systems and the NIST AI Risk Management Framework.

Can you give examples of AI transformation governance problems?

Common AI transformation governance problems include: AI pilots that never scale due to unclear ownership, consequential decisions made by AI with no human review process, compliance teams unaware of active AI tools, models producing biased outputs with no correction mechanism, and executives making AI strategy decisions without understanding the risk profile of deployed systems. Amazon, Workday, and SafeRent are publicly documented examples of organizations that experienced costly governance failures despite functional AI technology.

What role does leadership play in AI governance?

Leadership is central to responsible AI governance. Executives must align AI strategy with business objectives, establish oversight committees, allocate governance resources, and take organizational accountability for high-risk AI decisions. Without C-suite ownership, AI governance remains a compliance exercise rather than a strategic capability. Organizations with executive-sponsored AI governance deploy AI 40% faster and achieve 30% better ROI than those without structured leadership oversight.

How do organizations start building AI governance?

Organizations begin with three practical steps. First, audit all existing AI use across every business unit to establish a complete model inventory. Second, define clear ownership, accountability, and oversight for each system in production. Third, establish a cross-functional AI oversight committee with direct executive sponsorship. The RADAR Governance Framework, covering Responsibility, Accountability, Data Control, Audit Readiness, and Regulatory Alignment, provides a structured path toward a complete, auditable AI management system.